Here's the recording from our DevOps “Office Hours” session on 2020-03-04.

We hold public “Office Hours” every Wednesday at 11:30am PST to answer questions on all things DevOps/Terraform/Kubernetes/CICD related.

These “lunch & learn” style sessions are totally free and really just an opportunity to talk shop, ask questions and get answers.

Register here: cloudposse.com/office-hours

Basically, these sessions are an opportunity to get a free weekly consultation with Cloud Posse where you can literally “ask me anything” (AMA). Since we're all engineers, this also helps us better understand the challenges our users have so we can better focus on solving the real problems you have and address the problems/gaps in our tools.

Machine Generated Transcript

Let's get the show started.

Welcome to Office hours.

It's March 4th, 2020. My name is Eric Osterman and I'll be leading the conversation.

I'm the CEO and founder of Cloud Posse.

We are a DevOps accelerator.

We help startups own their infrastructure in record time by building it for you and then showing you the ropes.

For those of you new to the call the format is very informal.

My goal is to get your questions answered.

So feel free to unmute yourself at any time if you want to jump in and participate.

If you're tuning in from our podcast or YouTube channel, you can register for these live and interactive sessions by going to cloudposse.com/office-hours.

Again, that's cloudposse.com/office-hours.

We host these calls every week. We'll automatically post a video recording of this session to the office hours channel, as well as follow up with an email so you can share it with your team.

If you want to share something in private just ask.

And we can temporarily suspend the recording.

That's it.

Let's kick this off.

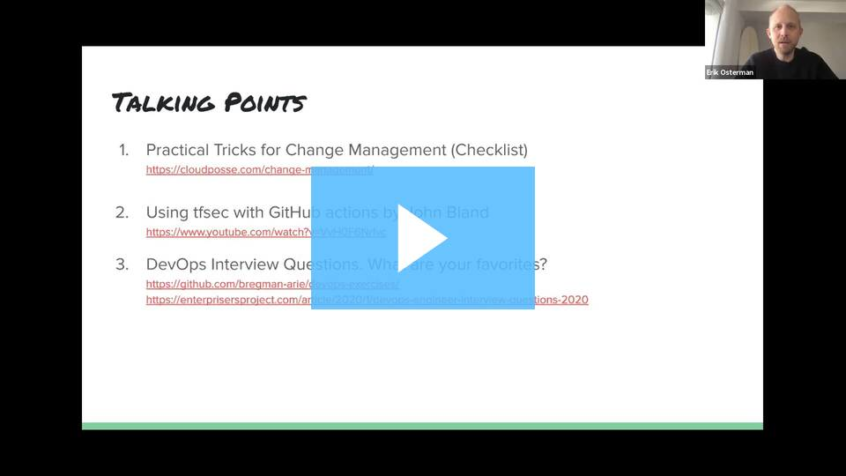

So here are some talking points for today.

They are mostly the same as last week.

There's just a bunch of stuff we haven't had a chance to cover because we've had so many good questions.

So if there's ever a lull in the conversation, here are some talking points.

So before we get to these, let's open the floor.

Anybody have any questions, problems, or interesting things they're working on that they'd like to share or ask about?

I have a question.

All right.

Go ahead.

All right.

So mostly it can actually be like McFaul took out help developers get through sort of the repeated tasks or build up containers efficiently.

So one thing I've started to put more focus on is the way in which we tag images.

So I'd like to know whether some of you use the git SHA as part of the image tag, as well as any particular standards that you find work best for tagging the images.

For the images, the whole naming convention.

Yeah Yeah.

So what we've been practicing, mostly as part of our pipelines, is that every push to the repo builds a Docker image and tags it with the git SHA.

And the short SHA.

Honestly, we never use the short hash.

We almost always just use the long commit hash, and the way we use that is then for separate pipelines.

So for example, if we have a separate pipeline that kicks off down the road and cuts a release like 3.2.3, that pipeline will look up the artifact for that commit SHA and tag it.

If there is no artifact then that pipeline fails.

So basically, we decouple the building of images and artifacts from the process of retagging those images for a release.
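The build-once, retag-later flow described above can be sketched as follows. This is a minimal illustration, not Cloud Posse's pipeline code: the `registry` dict stands in for a real Docker registry, and the function names and the `example/app` repository are hypothetical.

```python
import re

FULL_SHA = re.compile(r"^[0-9a-f]{40}$")


def build_tags(repo: str, commit_sha: str) -> list[str]:
    """Tags applied on every push: the full commit SHA and the short SHA."""
    if not FULL_SHA.match(commit_sha):
        raise ValueError(f"not a full git SHA: {commit_sha!r}")
    return [f"{repo}:{commit_sha}", f"{repo}:{commit_sha[:7]}"]


def promote(registry: dict[str, str], repo: str, commit_sha: str, version: str) -> str:
    """Release pipeline: retag the image that was already built for this commit.

    `registry` maps tag -> image digest and stands in for a real registry.
    Nothing is rebuilt here; if the commit has no artifact, the release fails.
    """
    source = f"{repo}:{commit_sha}"
    if source not in registry:
        raise LookupError(f"no artifact for {source}; release aborted")
    target = f"{repo}:{version}"
    registry[target] = registry[source]  # same digest, new name
    return target
```

Because the release step only adds a name to an existing digest, the image that ships is byte-for-byte the one that was built and tested for that commit.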

Now I see a couple of different patterns happen here.

And it depends a little bit on what your continuous delivery or deployment strategy looks like. Some companies don't practice strict semver for their releases because they want more of a streamlined process of things hitting master and then automatically going to staging, prod, or other environments.

Those companies tend to use SHAs for that instead of using semver.

There is still a way to use semver with that, which is kind of nice, where you make semver part of your commit history.

So you have, like, a release file, release.yaml.

What we've seen is pipelines that then apply what's in the release.yaml on merge to master.

So then it'll do that for you, which is kind of nice because then you have a totally Git-driven workflow for cutting releases. It's a little bit more rigid in the sense that you can't just use the release functionality on GitHub if you want to be consistent. Any questions there?

Yeah, you did get it.

So additionally, I'm starting to see fuller adoption of replacing the MAINTAINER instruction in the Dockerfile with labels, which gives you a bit more to work with. We've started to use labels to also put the git SHA in the image.

The version as well, along with the actual build.

Yeah you see that happening there.

So I think that that is an excellent idea.

If you are able to surface that information as part of your CI system and your Docker registry, and assuming that it helps your team or others reconstitute what happened.

So this is a big part of Codefresh. Codefresh uses these labels on images extensively.

So tagging the image, or labeling the image, if it passes; labeling the image if your, what do you call it, your security scanning, the vulnerability scanning, passes; labeling it with perhaps the build time.

So it almost makes a registry like the source of truth for all that extra metadata about that image and that follows that image around wherever it goes.

Have we been practicing it?

It's not been something we've invested in.

But I don't think it's a bad idea.

If it's become a project for you.

Yeah, I've just started to look closely at it.

Actually, I added build time to it.

Vendor ID.

Oh, yeah.

So exactly.

You're on it.

Yes. The way I'm doing it is by passing a build argument.

Yeah. So I'll get that from an environment variable.

See I am just.

That's the right way to do it.
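As a rough sketch of the approach just described, the snippet below assembles a `docker build` command that passes the git SHA as a build argument and also stamps it, the build time, and a vendor onto the image as labels. The label keys are the standard OCI image annotation names; the `GIT_SHA` and `VENDOR` environment variable names are assumptions, so substitute whatever your CI system exports. The Dockerfile would need a matching `ARG GIT_SHA` to consume the build argument.

```python
import os
from datetime import datetime, timezone
from typing import Mapping, Optional


def docker_build_command(image: str, env: Optional[Mapping[str, str]] = None) -> list[str]:
    """Return the argv for a labeled `docker build`; does not run it."""
    env = os.environ if env is None else env
    sha = env["GIT_SHA"]  # hypothetical variable name set by the CI system
    created = datetime.now(timezone.utc).isoformat(timespec="seconds")
    labels = {
        "org.opencontainers.image.revision": sha,
        "org.opencontainers.image.created": created,
        "org.opencontainers.image.vendor": env.get("VENDOR", "example"),
    }
    cmd = ["docker", "build", "--build-arg", f"GIT_SHA={sha}"]
    for key, value in labels.items():
        cmd += ["--label", f"{key}={value}"]
    cmd += ["-t", f"{image}:{sha}", "."]
    return cmd
```

The returned list can be handed to `subprocess.run(cmd, check=True)` in a pipeline step.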

And where this gets interesting is then if you I mean, obviously, this stuff is only as secure as your registry and is only as secure as your ability to add those labels or preclude systems or processes for labeling it.

But assuming that that process is secure.

This is a great way to also then enforce policies on what gets deployed inside of certain clusters based on those labels.
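A label-based deployment policy of this kind might look roughly like the check below. It is a hand-rolled illustration, not the API of Twistlock or any other product, and `scan.status` is a made-up label name used only for this sketch.

```python
REQUIRED_LABELS = (
    "org.opencontainers.image.revision",
    "org.opencontainers.image.created",
)


def policy_violations(labels: dict, required=REQUIRED_LABELS) -> list:
    """Return the reasons an image should be rejected; an empty list means allow."""
    violations = [f"missing label: {key}" for key in required if not labels.get(key)]
    # "scan.status" is a hypothetical label written by the scanning step.
    if labels.get("scan.status") != "passed":
        violations.append("vulnerability scan has not passed")
    return violations
```

As the discussion notes, a check like this is only as trustworthy as the process that writes the labels.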

I'm not sure.

I'm pretty sure that something like Twistlock will let you do that.

And I'm not sure if there's a way of doing it with OPA right now, but if somebody else knows the answer to that, let me know.

Awesome. Any other follow-up questions to that, or other questions?

How are you doing vulnerability scanning right now, Dale, on those images?

Yes this is quite by the personal side of it that we don't use to the hub or existing cortical testing.

So we do it at build time within our registry for our images, as well as at runtime, which is something that I like.

Just like everything encapsulated them against other ceilings as well as it also has a kitchen like this with vault to execute the runtime but it doesn't look pretty good.

Well, we have to look into possibly replacing Twistlock with something like Clair or Falco.

So we're exploring that.

But you know put covered as part of that.

Have you explored the AWS container registry scanning, and how it compares feature-wise and how effective it is by comparison?

Yeah. So actually it was clear that they are using Clair under the hood.

I don't know the level of control that we do get into everything.

But I'm thinking if we do it in duration.

So long.

So we'll get the reporting aspect of it.

But again, that just be based on just what you're offering what I saw it it didn't seem to be much.

Did you see that Quay has also been open sourced?

Now Yes.

Yeah. Well, one thing I'd like to see in any system like that would be the ability to track the mean time to resolution for a CVE in the system.

So, like, you don't want to shut down the service because a CVE is suddenly detected there and cause a blackout.

But you do want to track how long that persisted and when it was resolved.

Is that something that you guys are factoring in as well?
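Tracking mean time to resolution comes down to recording when each finding was detected and when it was resolved. A minimal sketch, assuming findings are available as `(cve_id, detected_at, resolved_at)` tuples with `resolved_at` set to `None` while the finding is still open (that data shape is an assumption, not the output format of any particular scanner):

```python
from datetime import datetime, timedelta
from typing import Optional


def mean_time_to_resolution(findings) -> Optional[timedelta]:
    """Average of (resolved_at - detected_at) over resolved findings only."""
    durations = [
        resolved - detected
        for _cve, detected, resolved in findings
        if resolved is not None
    ]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)


def open_findings(findings) -> list:
    """IDs of findings that were detected but not yet resolved."""
    return [cve for cve, _detected, resolved in findings if resolved is None]
```

Reporting on these numbers, rather than blocking deploys the moment something is detected, matches the point above about not causing a blackout.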

So we've had this year where or communication time varies because we have a private resource that keeps just doing that.

So we've been actually some teams and then we'll try to not limit what's possible.

So yeah.

So without a public plan that we have to put this out there not to build but develop a set of resources behind that, which is must start feeling.

So there's a lot of background noise where you are, Dale.

Any chance.

I know.

I know you're always in an open-plan environment.

Yeah, pretty much, in a coworking space like today.

OK How much was a bigoted are all taken by the lifetime squatters there.

Yeah Yeah I know that fighting for the phone booths.

All right.

Well, let's see.

Maybe maybe it quiets down a little bit.

There's a question from Casey Kent.

He's been part of the community for a while now.

Yes, he asks the question on Slack: can you touch on the setup and best practices with the EFK stack on Kubernetes, if you have some time?

Sure. Certainly I can point you in the right direction in that case.

So this is a very common question that comes up in the community.

Unfortunately, our office hours notes are not properly tagged on like what we talked about or what to do.

Just be aware that past office hours have talked about how to set this up.

So the EFK stack, for everyone, that's the Elasticsearch, Fluentd, and Kibana stack.

It's become pretty much the most common open source alternative for something like Splunk or Sumo Logic.

So what we would recommend in this case is, first of all, configuring Fluentd not to log directly to your Kibana, sorry, to your Elasticsearch instances, because it's so easy to overload Elasticsearch, and when Elasticsearch is unhappy it's really unhappy and it takes a long time to recover.

Also, scaling your Elasticsearch clusters so you can send a firehose to them is very expensive.

So what you're going to want to do is set up your Fluentd to log directly to Kinesis, which can easily stream, if you're on AWS, which I think you are.

So if you send all your logs from Fluentd directly into Kinesis, Kinesis is going to absorb those as fast as you can send them.

And then you have an excellent option to drain that to S3.

So you're going to want to send those logs from Kinesis into S3 for long-term storage, and you can have all of the lifecycle rules and policies there.

We have some great modules on Cloud Posse for it, like a log storage bucket that helps you manage those lifecycle rules very easily.

So you can consider that.

And then the other thing you're going to want to do is drain it for real time search into Elasticsearch.

So both these modes are supported by the Terraform provider, by the Terraform resource for this.

Off the top of my head I forget exactly what it's called, but it will write directly into S3 and Elasticsearch.

So then the last thing is, for certain things you'll be able to use Athena if you want to query the data in S3,

so long as your query completes in, I think, what is it, 30 minutes. And then for developers and stuff like that,

they have real-time access to the logs inside of Elasticsearch.
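The shape of that architecture (absorb everything quickly, then drain in batches to both long-term storage and search) can be modeled in a few lines. This is a toy in-memory model to show the data flow only; it does not call Kinesis, S3, or Elasticsearch, and the class and parameter names are invented for the sketch.

```python
from collections import deque
from typing import Callable, Iterable

Sink = Callable[[list], None]


class BufferedFanOut:
    """Absorbs records as fast as they arrive, then drains batches to every sink."""

    def __init__(self, sinks: Iterable[Sink], batch_size: int = 500):
        self._queue = deque()
        self._sinks = list(sinks)
        self._batch_size = batch_size

    def absorb(self, record: dict) -> None:
        # Producers (the log shippers) never wait on the downstream stores.
        self._queue.append(record)

    def drain(self) -> int:
        """Deliver queued records in batches; returns how many were delivered."""
        delivered = 0
        while self._queue:
            size = min(self._batch_size, len(self._queue))
            batch = [self._queue.popleft() for _ in range(size)]
            for sink in self._sinks:  # e.g. one archive sink, one search sink
                sink(batch)
            delivered += len(batch)
        return delivered
```

The point of the buffer is that a slow or unhappy search cluster slows down draining, not the applications that are writing logs.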

Now I want to point out one other thing that we've had a lot of success with, that I like:

there's a little utility.

It's called kube-sentry.

I think there are two options for this.

There are two open source projects, and what it'll do is take all the events happening from the Kubernetes event log and ship those into Sentry. Sentry is the exception tracking tool.

And now what's cool is you see the most common exceptions bubbling up to the top, the most common events and things happening.

And you can assign those to teams to look into, using all the conventions that you have in Sentry.
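The "most common events bubble up to the top" behavior is essentially grouping and counting. A small sketch of that idea, assuming each event has already been reduced to a dict with `namespace`, `kind`, and `reason` keys (a simplification of the Kubernetes event object, and not how Sentry itself fingerprints issues):

```python
from collections import Counter


def top_event_groups(events, limit: int = 5):
    """Group events by (namespace, kind, reason) and return the most frequent groups."""
    counts = Counter((e["namespace"], e["kind"], e["reason"]) for e in events)
    return counts.most_common(limit)
```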

So Sentry is also open source, or if you're using the hosted version, that works as well.

So Casey, was that a good overview of the architecture for setting that up?

Cool So he says that was what he was looking for.

And also two other notes.

I mean, we're typically using the managed Elasticsearch by AWS, and that also comes with Kibana out of the box.

So if you know the path.

There's some path to it.

I forget what it is something.

But if you know that, then you can just access Kibana directly there.

You know, a lot of people speak very highly of Elastic Cloud and their hosting of Elasticsearch being more robust, with newer versions, newer releases of Elasticsearch.

So that's a consideration as well.

I just.

And it can be controlled with Terraform like everything else.

The challenge there is if your organization has kind of a blank check to use AWS services,

now suddenly you've got to go get another vendor approved, and maybe that's why you wouldn't use it.

All right.

Any any other.

Oh Andrew, I haven't seen you around.

Good to see you join today.

Where've you been?

I've been busy.

Well, so how's your... Any interesting news to share with your projects there, side projects perhaps, dad's garage?

No, not really.

Not so much other than the.

I got that I got my team on board.

So we're going to work on it.

Oh, excellent.

I was not I was not able to get them on board with open sourcing our work.

But maybe someday Yeah but we're going to build it out.

Yeah I like my company in general.

It's not I wish we did more.

Yeah, I think it's very difficult to go from closed source to open source.

And it makes the in-house counsel very uneasy about that.

But if you can get them to agree that certain new projects will be open sourced from the start maybe components like modules and stuff.

That's a more clear-cut, cut-and-dried path to open source.

It sounds like we're there.

Well, I have our chief intellectual property counsel on board.

I have our vice president on board.

And it just has gotten pushed over to the back burner.

And it's really a shame because I'm so passionate about it that every few weeks.

I send out a you know, an email on this thread that has been going back for months now.

And like, hey, what's the status on this.

Oh, now what.

So when I was at CBS Interactive.

I was leading up the cloud architecture over there.

And that was one of my big drives, getting an open source policy, an open source initiative at CBS.

And yet, I think it took the better part of a year before we were able to open source one project out of that.

Anybody else have any experience helping your organization open source code.

You have.

It's difficult. It can be like pulling teeth. Yeah, were you able to do any of that at your last place, John?

No, there was talk of doing it.

But you know, just getting that ball rolling. There's a couple of little utility things here and there.

But you know, to get them to understand the value add of open sourcing is quite difficult.

Yeah, actually, this is Blaze.

Hi, Blaze.

Hi So it turns out Mike.

So I've been working at sumo for the last year, and they're pulling back on their open source initiatives.

Really Yes.

In fact, I am officially looking for another job.

Oh, yeah.

Anybody looking for a community evangelist, Blaze is your guy here.

That's Sumo Logic.

Yeah Yeah, that's where I was.

Yeah, they have I mean, I sort of get it.

They didn't say as much.

But they want to IPO.

So they're basically just not making any investments that don't have a direct immediate payback.

Yeah, I think long term it's probably a strategic mistake, because they want to have a bigger presence in the cloud native environment and the competition is just eating them up.

Yeah, that's an interesting one.

I hope, though, that.

And obviously, this is being reported.

And we shouldn't talk about anything we shouldn't talk about.

So let's just keep that in mind.

But their open source agent,

I mean, I think it's great that the Sumo Logic collector is open source.

Hopefully they continue to invest in that.

I know that a number of companies are frustrated sometimes with missing log events and that the agent can be consuming considerable resources just to consume all those logs and stuff like that.

So I think the more people looking at it, the better.

No, actually they're going to be.

I think there's no question that they're less interested in investing in the collection because they don't really see it as their you know the installed agent.

So maybe relying more, therefore, on third-party agents like Fluentd.

Yeah, Fluentd, that makes more sense.

Yeah, Fluentd and Prometheus are huge parts.

Although interestingly, they have a relatively limited participation in those projects.

But yeah.

So in terms of things being recorded.

So far everything's fine.

They've been very transparent as far as I can tell about that.

But I think that what would work well for me is someone who really wants to get as much adoption of their product as possible.

So if you guys know anybody who is like super aggressive about developer outreach and making sure that their stuff is easy to use, and it works well Yeah, I'm kind of in a little bit of a bind quite honestly, because I think you know for years at Yale for years at Google three years, it suddenly changes somebody you know it's just not the same.

And I find that if people aren't willing to get into continuous improvement.

And if they're not committed to excellence.

I end up getting into trouble by making suggestions or rubbernecking right away.

Well, I think one thing to look out for, though, if you do want to work more with open source, is to look for a company that started that way rather than one trying it out to see if they could get more customers.

That's such a good point.

Thank you.

Because in the former, they start out that way, so it's built into their DNA.

You can't really undo that.

But the latter.

It's kind of like instant zero.

Look at that sounds obvious.

Yeah Yeah.

So there.

I think there's a number.

Well, one company comes to mind.

I don't know.

You can check out Kubecost, the Kubecost channel, and see if they're looking for anybody.

They have open for being there and doing some interesting things.

I'm hearing a lot about Fairwinds as well.

Yeah, Fairwinds as well.

Yeah, core product. Basecamp's coming out with their new HEY product for email.

So, and I don't think you get more open source than the creator of Rails.

Oh my god.

I would give my left nut to work for Basecamp.

They've been hiring for a while.

So you might want to take a picture put that on the billboard.

All right.

So any other questions related to Cloud Posse repos, or DevOps in general, or best practices, or surveys?

Do you want to get a pulse on what other people are doing.

This is a great chance; we've got about 70 people on the call right now.

So I've been, in the last three weeks, standing up some Amazon MQ instances, particularly around network brokering.

And I tried to use the Cloud Posse module.

But I couldn't really control the config file in any way.

And I'm having to write a lot of custom tooling around setting variables and making it result in XML that doesn't blow up.

So yeah, let me talk about that MQ module just for a second.

So all of our modules are borne out of actual engagements, customer engagements, and then we open source them. We have this kind of open-source-first model where we start the modules as open source.

The use of ActiveMQ was for an enterprise SaaS product that we were running on-prem.

And it turned out not to work well with Amazon's MQ service.

So we had to cut back on it, so therefore we haven't had a reason to continue investing in that.

But I will say, with Maxime on my team: two or three weeks ago, we had 130 open pull requests against our Terraform modules.

And I think we've gotten this down to like 13 or something.

So if you do want to spruce it up, or you see any ways we can improve it, let us know.

Let me see.

My guess is that module is still HCL1, not HCL2, and some of the template file manipulation was really basic in HCL1.

So if we wanted to do any more advanced parameterization of that file, it would not have been feasible in HCL1. With HCL2,

now I think it's totally feasible.

So we could have a better, more powerful config that you could pass there, or just provide an escape hatch so that you provide the raw XML.
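The "structured settings, plus an escape hatch for raw XML" design can be sketched like this. The element and attribute names are placeholders for illustration and are not the real ActiveMQ broker schema or the interface of the Cloud Posse module.

```python
import xml.etree.ElementTree as ET
from typing import Optional


def render_broker_config(settings: dict, raw_xml: Optional[str] = None) -> str:
    """Render a broker config from settings, or pass through caller-supplied XML."""
    if raw_xml is not None:
        # Escape hatch: the caller owns the whole document. Parse it once so
        # malformed XML fails here instead of at deploy time.
        ET.fromstring(raw_xml)
        return raw_xml
    root = ET.Element("broker")  # placeholder element names
    for name, value in sorted(settings.items()):
        ET.SubElement(root, "setting", {"name": name, "value": str(value)})
    return ET.tostring(root, encoding="unicode")
```

Letting a serializer build the document, rather than string templating, is one way to get XML "that doesn't blow up" when a value contains special characters.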

That's helpful.

I'm not directly familiar with that module right now.

So I might be misstating some things.

But did that clear anything up, or is there additional feedback you have on that?

That was pretty much it. At this point, I've had to pretty much rewrite everything from the ground up.

And if I can figure out any ways to piece any of that out of there and send it back your way, I'd love to do that.

Yeah, for sure.

Feel free.

And this goes for anyone here.

If you have anything you want to contribute back and you're not sure about the next steps to start on that,

you can always reach out to me on the SweetOps Slack. If you haven't joined the Slack team, by the way,

that's a good chance to promote that for a second.

So if you go to slack.sweetops.com you can join our Slack team.

And then my name's Eric on there; you can find me.

Cool. Casey Kent asks in the chat about common patterns for machine learning infrastructure for continuously training, ingesting data, and ETL.

There are there's just a ton of stuff out there.

But it'd be nice to hear what you suggest.

So I can't speak to this personally as a subject matter expert.

I can describe a pretty common architecture pattern that one of our customers is using at a very high level.

But I'm not sure if that's even valuable.

You probably already know to that degree.

What I would say is there's obviously the whole suite of Amazon's products for training the models for machine learning.

We've not touched or looked at it.

Maybe the people here on the Slack team have done more with this.

Anybody have some context?

Sorry, I zoned out for the beginning of the question.

But have you checked out Kubeflow?

Cloud Posse has not yet worked with Kubeflow.

Yeah, there's a bunch of different UI or API centric different tools.

I did a sort of Kubeflow workshop at a meetup at some point.

That was the extent of my knowledge, and I thought it was pretty useful for that beginning part.

And then one of the things is you can plug-in different platforms for how you want to host it.

Once you get the model built, specifically for models that get retrained a lot, like marketing models that have seasonality that you want to refit over and over again, something like that investment starts to make sense.

But if it's a model you train a few times, then there's like dozens of different ways to do it.

None of which I've been super excited about.

But definitely, if you're not sure where to start, Kubeflow itself, or typing "Kubeflow versus" and then you'll see all the other ways, seems like a good start.

Yeah, I had one thing to mention, about a conference in town.

Yeah, SCaLE.

I think is this week.

Oh, yeah.

Thank you for bringing that up.

That's a good tip.

So if you're in Los Angeles or you're close enough, SCaLE is happening towards the end of this week.

I think it starts on maybe Thursday.

Yeah And runs through Sunday.

And then there's DevOpsDays on Friday.

I'm going to be at DevOpsDays this Friday at the Pasadena Convention Center.

Pretty much all day.

So if you're there, please hit me up on Slack and I will find a time to meet up for coffee or hang out.

Are you going to go Todd.

I'm not feeling well enough to go bummer.

My kids at home.

I'll be there Friday and Saturday.

Who that.

Sorry I'll be there got an awesome dog.

Thanks for letting me know.

Dude, hit me up on Slack if you are around.

It's enough.

I mean, you bring up a serious note there, though, that a lot of conferences are being canceled like they're dropping like flies right now.

Google canceled theirs, and KubeCon Amsterdam just got canceled this morning.

Oh, really.

Yeah, they delayed KubeCon three months.

Yeah, exactly, some of them are postponing, or postponing indefinitely.

So it's too bad.

I'm going to take my chances and see extreme isn't there.

Probably. But, you know, bless their hearts, the SCaLE team works for basically no pay,

and on a very minimal budget for a conference of that size.

Some of the equipment for the recording is pretty dated.

Eric it's Adam Watson.

Hey not to add anything but Pasadena declared a state of emergency an hour ago.

So just a heads up.

All right.

Hopefully that doesn't need like the messenger.

You automatically have jurisdiction to cancel all conferences and stuff.

So yeah, that's worth checking out to see if that's going to affect SCaLE at all.

Yeah, just said that that was an hour ago.

Figured I'd float that.

Yeah, thanks Adam for bringing that up.

Nobody shoot the messenger.

On the topic of events.

I think nobody in this group is in Boston.

But if you know any people in Boston.

I'm related to knock at a stream that they try to record the talks as well.

So if I can record them for a friend observed 2020.

It's like a CNCF OpenTelemetry-related event that a friend of mine is trying to put on.

So it's April 7th.

So my hope is that we can get through the like curve and then it will be back down by then but we'll see.

Worst case, we'll try to figure out rescheduling but the link in the observer shot.

So what's that 24 hours of DevOps conference?

I forget what it's called. That might be our future.

What was that thing.

And that was like in December or something.

Yeah, and it was, like, last November. All Day DevOps.

Yeah, there's a couple of those not related to DevOps that have done the 24-hour thing.

Not my type of conference organizing.

I like sleeping occasionally.

Yeah. And I do like meeting people face to face.

Actually I mean, honestly, the reason why I go to conferences is to talk to meet the people and hear their stories less the actual talks themselves.

All right.

Any other specific questions? Otherwise maybe I'll jump into practical tips and tricks for change management and get your feedback there for what you've done.

Let's see here.

Now, this came up.

I forget who it was that asked the community at large what you're doing for change management and change control.

I wanted to kind of inventory those tips and tricks to provide guidance because I think just saying, you know, just using GitHub isn't enough, just having IAM policies isn't enough, just having CloudTrail audit logs isn't enough.

So what are the things that you have in place for change control? Here's kind of a list of some of the things that came to mind as common best practices today.

So I guess the obvious thing to bring up is having a version control system.

This is your GitHub.

This is your GitLab or Bitbucket.

This is what allows you, if you're practicing infrastructure as code, then to point to the code that should have resulted in a change along the process here.

The next one being infrastructure as code defining the business logic of your infrastructure and using reusable modules for that.

So it's one thing just to write infrastructure code like raw Terraform resources.

But then I do want to capture that a module, like a Terraform module or Helm chart, is a discrete unit of business logic, which you can kind of sign off on organizationally, that this is how you do things.

And then reduce the scope of change control when you're using reusable components there, especially ones that you've signed off on in the organization.

Automation.

Obviously taking what you have now in source control and having a way of getting humans out of the equation, because humans are difficult to automate but source control is easy to audit, and anything that is machine-controlled automation you can continuously refine and improve and have controls in place.

Pull request workflow.

So basically how you enforce that every change is reviewed, and approvals on that. And related to that,

having approval steps within your pipeline.

So you might have all the checks and balances in your GitHub with branch protections and code owners requiring certain checks to pass and a certain number of reviewers.

But in the end, you might want to have still additional controls that are arbitrary, and having the ability to have approval steps in your pipelines is an excellent way to have control over when things change and visibility when they change.

Notifications.

I'm sure a lot of people here are already sending a lot of this stuff to Slack.

One thing that we've really liked, and I was surprised how much I liked it, was the ability to add GitHub comments on pretty much any commit SHA.

And then you have that history there.

So if you have a pull request, you can also comment on those commits and see when that pull request and those commits were deployed, and into what environment.

So that provides a nice living record of changes.

So, as I was talking about earlier, using branch protections.

This is very much key to enforcing when stuff changes.
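The rules a branch protection enforces (required checks must pass, a minimum number of reviewers must approve) reduce to a small predicate. The sketch below is a generic model with invented class and field names; it is not the GitHub or GitLab API.

```python
from dataclasses import dataclass, field


@dataclass
class BranchProtection:
    required_checks: set = field(default_factory=set)
    required_approvals: int = 1


@dataclass
class PullRequest:
    author: str
    passed_checks: set = field(default_factory=set)
    approved_by: set = field(default_factory=set)


def merge_blockers(pr: PullRequest, rule: BranchProtection) -> list:
    """Return the reasons a merge is blocked; an empty list means it may merge."""
    blockers = []
    missing = sorted(rule.required_checks - pr.passed_checks)
    if missing:
        blockers.append("checks not passed: " + ", ".join(missing))
    reviewers = pr.approved_by - {pr.author}  # self-approval does not count
    if len(reviewers) < rule.required_approvals:
        blockers.append(
            f"needs {rule.required_approvals} approval(s), has {len(reviewers)}"
        )
    return blockers
```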

So this is something GitHub supports very much.

I'm less familiar with GitLab and Bitbucket.

Any users here using GitLab and Bitbucket?

How much of the branch protection functionality do they have compared to GitHub, assuming open source or paid, both?

And if you can make the delineation between them, that'd be great.

Yes. So my experience with GitLab is that it does actually have the enforcement.

I think it's actually set up by default.

Then it starts with... oh, you can set it up organizationally.

That's nice.

Yeah, it really sucks that you can't do that with GitHub.

Yeah, I believe GitLab...

So I have the most experience with on-prem GitLab open source, and I believe free

GitLab is actually different.

It gives you more. On-prem GitLab open source gives you: you can't merge if the pipeline hasn't passed.

But it does not give you pull request approvals.

No you've got to pay for it.

Wow, you've got to pay for the pull request approvals.

It's the very bottom tier; it's only like $4 per user per month.

Yeah, but you've got to pay for the pull request approvals.

I'm not sure about the GitLab.

So what.

OK, that's good.

That's good.

Does GitLab have the concept of code owners?

I think that's a GitHub thing, isn't it?

Isn't that just a Git thing?

Know what I mean is while entered.

But it's got to be enforced at the pull request approval.

So code owners relate to approvals.

Yeah Yeah Yeah.

OK I got.

So GitLab does allow for branch merge protection. It controls who can merge: you have maintainers, developers and maintainers, or no one as a role.

And then you also control who can push to it,

with the same roles.

Yeah, that's Yeah, that's correct.

Absolutely Yeah.

So code owners is where you can basically have a file.

And that file will map a team to a path on the file system of that repository.

So you can say that anything in your Terraform IAM project, for example, has to be signed off by SecOps.
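That path-to-team mapping can be sketched as an ordered rule list where, as in GitHub's CODEOWNERS file, the last matching rule wins. The matching here is deliberately simplified to `*` and directory prefixes rather than full gitignore-style patterns, and the team names and paths are made up for the example.

```python
def owners_for(path: str, rules) -> list:
    """rules: ordered (pattern, [owners]) pairs; the last matching rule wins.

    Supports only "*" (everything) and directory prefixes, a simplification
    of real CODEOWNERS pattern syntax.
    """
    owners = []
    for pattern, teams in rules:
        directory = pattern.strip("/")
        if pattern == "*" or path == directory or path.startswith(directory + "/"):
            owners = teams
    return owners


RULES = [
    ("*", ["@example/platform"]),              # hypothetical default owners
    ("/terraform/iam/", ["@example/secops"]),  # IAM changes need SecOps sign-off
]
```

With these rules, `owners_for("terraform/iam/main.tf", RULES)` resolves to the SecOps team, while any other path falls back to the default owners.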

For example, GitLab does support code owners in the bottom tier.

Not free.

OK, cool.

So in the starter or bronze tier, which is the $4 a month per user.

So the next step is kind of everything we've discussed so far is a little bit at the mercy of your business solution.

Then we get the ability to enforce policies, and policy enforcement has been a really hot topic, getting a lot of attention especially towards the end of 2019, and I think it's going to get even bigger.

Now in 2020, with tools like Open Policy Agent, conftest (which builds on OPA), and tfsec, the tools at your disposal to enforce broader-level policies that make it easier to administer change control at an organizational level are reaching greater maturity. Still early days, but it's at a point.

It's usable now.

And there are some great videos and demos out there of it.

In fact, we have one on tfsec.

A basic example that John whipped up; we'll link to it.

John, did you want to talk about tfsec today, or should we just share that video?

It's up to you.

OK, I guess she another team to an flat.

Like a show.

OK big question came I forgot to ask.

But yeah.

Can I show a quick example.

Are any users interested in seeing a demo right now of tfsec? tfsec is a purpose-built static analysis tool for Terraform to enforce policies on your code.

And using that together with GitHub Actions.

All right.

We got a thumbs up from Adam.

Yeah, sure.

OK, before I do that, I would actually add a line under "use version pinning" that using semver, when it comes to some things like Helm charts, is not even enough.

You have to use SHAs.

But that is a valid point.

Let me just add that too. Can you write what you said in the office hours channel so I don't forget it?

And I'll update this with some of the caveats there, because there are some caveats: semver is only as good as the maintainer's ability to practice it.

And the problem with, like, Helm is that many maintainers don't actively bump their semvers.

So they're constantly squashing their version.

And that's the problem.

Like, I could push up 1.1.

And you can use it.

And then I could push up another 1.1 with changes.

And another good point you could add here is that semver is not cryptographically secure, whereas using SHAs is.

So it's much.

It has been shown you can if you are really bent on Messing people up.

You can probably find some version of a history to cause a duplicate SHA or something.

But generally, it's secure. Or, as John recommends, you can tag, if you're using some tagging scheme.

Yeah, plus the SHA.

That might.

Yeah. So that's about the semver, to add something to the end there.

So the hard thing with SHAs, especially if you're looking at a repository, is knowing which one of those is the specific version that I want, and putting the version number in there kind of makes that a little bit easier.

You still have to dig into the specific SHA.

But it can help, tagging on the SHA.
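
One way to picture the "version plus SHA" pin is the small Python sketch below. It is an illustration only: the `version+digest` pin format, the function names, and the use of a SHA-256 digest over the artifact bytes are assumptions, not something Helm or any registry does for you.

```python
import hashlib


def parse_pin(pin):
    """Split a hypothetical 'VERSION+SHA256' pin into its two parts."""
    version, sep, digest = pin.partition("+")
    if not sep or not digest:
        raise ValueError(f"pin {pin!r} has no digest component")
    return version, digest


def verify_artifact(pin, version, content):
    """True only if both the version and the content digest match the pin."""
    pinned_version, pinned_digest = parse_pin(pin)
    actual_digest = hashlib.sha256(content).hexdigest()
    return version == pinned_version and actual_digest == pinned_digest


# A maintainer publishes 1.1.0, we pin it, then they re-push 1.1.0 with changes.
original = b"chart contents for 1.1.0"
pin = "1.1.0+" + hashlib.sha256(original).hexdigest()
repushed = b"chart contents for 1.1.0, with changes"
```

Here `verify_artifact(pin, "1.1.0", original)` passes, while the re-pushed artifact under the same version number fails the check, which is the squashed-version case described above.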

That's good.

Thanks Yeah.

Thanks for telling me that.

It's more of a security topic than it is a change management one.

Yeah, absolutely.

It's just a question of yourself.

Yeah, I think it's hard to have one without considering the other.

Oh, sure.

Damage control.

So this list here is not exhaustive.

I whipped this up in about 20 minutes.

So if anybody has points to add, basically, I want to add to this the things that you're doing and the recommendations you have.

So please, maybe add to the thread here.

There's a link I posted, with the change management, in the office hours channel.

If you add any suggestions there, in the thread, I will try and incorporate those into this.

All right.

So John, are you setup.

Awesome I got to hand over the reins and we're going to get a little nice here.

You know accidents.

This was this is unscripted and unplanned.

So forget it.

We will thank the demo gods for a successful demo.

Yeah, exactly.

Sharing. So I kind of wanted to go through.

I guess I should share here, right?

I kind of wanted to go through what tfsec and tflint are, kind of showing the Actions here as opposed to waiting for the Actions to run and things.

But kind of speaking through that.

So one of the questions that came up in the chat after the video was posted was about using tflint as opposed to tfsec.

And so tfsec is essentially static analysis for your Terraform.

So it has a set of rules.

It's not super exhaustive, but it does have a pretty good set of rules for things that you want to watch out for, especially along the lines of security group rules open to the internet.

So I have some basic Terraform here.

This is meant to fail.

So I have a CIDR block that's wide open.

Nothing really specific there.

CDP missing some configuration here.

And this Azure managed disk's encryption is actually set to false.

So in running tfsec here, and let me expand this a little bit.

It basically looks at my code and determines: hey, this CIDR block actually should not be wide open.

This one should use HTTPS, not HTTP. This one here is actually missing a VPC configuration.

And this one needs to be secured or encrypted given out.

But there are those times where you actually need something like if it's a web server right.

You need to actually utilize this open CIDR block here.

So it has the syntax to where you can actually tell it to ignore one of these one or more.

And you can see it actually is missing that one.

Now Same thing with you.

Laughs thanks you too.

Yes things like that.

But what you see here is that there wasn't a catch on my specific tflint code over here that is utilizing a T12.

So the T12 extra large is a size that does not exist.

Right. So if I run tflint here, it doesn't catch any of these security issues, but it did catch that my specific instance type is invalid.

So I think there's not a direct one-to-one comparison to say, hey, you should use tflint instead of tfsec.

I think they both are useful for different purposes, even though there may be at times a little bit of overlap.

But as you can see, I'm using aws-vault.

I just have a shortcut to a B because I don't like typing a lot.

But if I put in, like, the wrong AMI, it actually is talking to AWS, so I like to set my region and all that.

So it's actually talking to AWS and looking to see: is this valid?

So there is a little bit of a cost here in the sense of speed.

So if you hook it up to, like, a pre-commit hook or something like that.

It may take a second, depending on how big your Terraform project actually is.

But it's definitely a pretty good.

How well does it work.

If you're using like almost exclusively modules and stuff like that.

I think it's actually operating at the resource level it does.

But they actually do have modules support.

And I started working to kind of get this up and I'll add another video that goes through these fully, but it actually can check a module to see if the actual types exist and actually go into the module to make sure that the resources in the modules actually are valid.

That can have a little pro and con depending on the open source module.

But you may be using.

There may be some issues there.

But the good thing is that they do have this ignore flag that you can use there, as well as a full HCL config file that you can use.

And you can tell it to ignore certain modules in here, provide var files, variables, specific credentials, and also tell it to disable certain rules.

But the rules are actually, I think it's 700 or something rules.

Yeah 700 plus rules wow that's a lot.

Yeah Yeah, that's good.

That's cool.

Are you able to add additional rules.

Yeah, they actually have a way to configure it actually didn't go through that part.

But there's a way to extend it beyond just the basic configuration.

Yeah. Did you get an Actions run that you've teed up?

We're almost out of time.

I think so.

I'm not sure we have time to go through the full run.

But I do have some, and so I have this one for tfsec, which was basically the same thing that we just looked at there.

So now we actually go to the other one.

So let's see a seconds here.

This is one of the passing runs.

It's very simple.

It's not a lot that is happening.

It's basically just running tfsec.

But the configuration usage is basically just this.

There are configuration changes that you can add in here variables those sort of things.

But this will pretty much run tfsec on the current directory and let you know whether or not it's passing or failing.

You can do it on the PR as well.

Of course.

But it's very useful to actually get a heads up that something actually happened at this time.

I know I can't see it because it's not signed in.

And this is browser.

But it does give the full output.

So related.

Also related to this: your tfsec training repo, is that open?

That's public right.

Yes Yeah.

Yeah All of those are public.

Yeah So this is.

So we'll share that in office hours.

This is the full output here.

So yeah, it's very useful.

Very quick to set up.

Very easy to use, and it definitely will help catch some of those issues that you just may miss.

Where I see this being exceptionally valuable is if you're practicing a traditional Git workflow where you deploy on merge to master, meaning that you've already lost the recourse to make any corrections by the time you've merged, and you want to mitigate the failures after merge to master.

So I think this is a really nice way to avoid that.

That's if you're not applying before merge to master, which is like the Atlantis workflow.

Exactly. And especially with, like, tflint here.

If I just fat-finger that.

And it's not like some malicious issue: whenever I run it, I find out, oh, I actually have an issue here, before I go through and apply. It's just as useful to find that stuff out as early as possible in the software development cycle.

Something else that I've looked at in this space.

I haven't had a chance to use it yet, but it looks very interesting: you can use OPA to evaluate Terraform as well, and conftest.

So conftest builds on OPA in a more opinionated way as well.

So that's kind of cool.

The example, they gave us is actually kind of a useful one.

Yeah, the example they give is this.

You have decided that you don't want your Terraform scripts to be too big, and you create an OPA policy that says you're not allowed to create more than x number of resources with one Terraform apply. OPA runs in your pipeline or wherever.

It runs before you apply and can actually stop you.

The term you like to use is blast radius.

You know your blast radius has to be smaller than a certain limit.

Yeah which actually has as I work on it because I work on the first apply where you may be generating it.

It looks at the plan.

It doesn't look at the apply.

It will create a Terraform plan and then look at the plan.
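
The blast-radius check described here can be sketched in Python as a stand-in for the real thing. This is not Rego and not OPA itself; it only assumes the plan has been exported with `terraform show -json`, whose output lists each change under `resource_changes` with a `change.actions` array. The function names and the limit are hypothetical.

```python
import json


def created_addresses(plan):
    """Addresses of resources the plan would create (including replacements)."""
    return [
        rc["address"]
        for rc in plan.get("resource_changes", [])
        if "create" in rc.get("change", {}).get("actions", [])
    ]


def within_blast_radius(plan, max_creates):
    """True if the plan creates no more than max_creates resources."""
    return len(created_addresses(plan)) <= max_creates


# A trimmed-down, made-up plan document for illustration.
sample_plan = json.loads("""
{
  "resource_changes": [
    {"address": "aws_s3_bucket.logs", "change": {"actions": ["create"]}},
    {"address": "aws_iam_role.app", "change": {"actions": ["no-op"]}},
    {"address": "aws_instance.web", "change": {"actions": ["update"]}}
  ]
}
""")
```

A pipeline step could fail the build when `within_blast_radius(plan, limit)` is false, which also shows the cold-start concern raised next: a first apply of a module-heavy project can exceed any small limit.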

Yeah why does value.

Well, I like it.

I wonder how well it works in practice.

But yeah in principle, I like it.

The reason why is, like, you're using a lot of modules, and modules using modules using modules, and it's very easy.

The plan doesn't care.

Well, right.

When you do a Terraform plan, even if you're just doing modules.

It's going to tell you exactly what's actually being created.

Yeah but it's created the convention.

But usually I'll have 25 resources, at least, getting created if I'm using a module. For example, anything that does something more serious is going to be creating a lot of resources.

So the cold start problem like once it's been provisioned I can imagine that we don't want to see too many new resources created all the time.

But let's finish it up from scratch.

I wonder like I agree with small pull requests.

But even a small pull request can have a big plan.

Yeah, we're almost at the end of the hour, we got 5 minutes left any questions.

Related maybe to the stuff on the security of plans: John, what would you say the learning curve is on OPA?

I can't speak from firsthand account.

It's in line with the rest of the industry like to pretend it is.

And it's high along with everything else in there.

I think it's very readable.

Like, anyone can read Rego.

I think the hardest part about it is developing opinions that can be codified.

Into policies, not what like.

We know we want to do it.

What should we actually be doing.

Like that's the hard part.

The language is super readable very easy.

You'll pick it up in less than a half hour or something.

But get that with the same Walker actually you know where you to look like predefined plots.

That would make sense.

Like a use case.

Yeah, that was right where that's done.

Start small though.

I mean, we're starting.

We haven't really used it much.

But the first thing we're going to do is use it to create a mutating admission controller, and all it's going to do is check that every pod in the cluster has a label with a charge code so that we can track back for billing.

That's all. That's all it's going to do.
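
As a rough illustration of that charge-code check, here is a Python sketch of the decision logic only. The real thing would be a Rego policy served by OPA behind an admission webhook; the label key `charge-code`, the function names, and the shape of the returned dict are assumptions for this example.

```python
REQUIRED_LABEL = "charge-code"  # hypothetical label key


def missing_charge_code(pod):
    """True if the pod manifest has no non-empty charge-code label."""
    labels = pod.get("metadata", {}).get("labels") or {}
    return not labels.get(REQUIRED_LABEL)


def review(pod):
    """Return an allow/deny decision for a pod manifest (a plain dict)."""
    if missing_charge_code(pod):
        name = pod.get("metadata", {}).get("name", "<unnamed>")
        return {
            "allowed": False,
            "message": f"pod {name} has no {REQUIRED_LABEL} label",
        }
    return {"allowed": True, "message": ""}


labeled_pod = {"metadata": {"name": "api", "labels": {"charge-code": "CC-1234"}}}
unlabeled_pod = {"metadata": {"name": "worker", "labels": {}}}
```

Starting with one narrow rule like this keeps the first policy easy to reason about before adding more.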

Yeah quite a small win.

Let's go.

And with that kind of policy, the use of the policy agent is a little different, right?

Because you wouldn't be doing that at CI, would you?

Well, that would go into a mutating admission controller.

Inside the cluster.

But you can use OPA that way.

Mm-hmm. Oh, but OPA can be used...

I mean, OPA is great.

OK. You can use Rego with OPA all over the place.

Yeah, I think it's giving some enterprise vendors a run for the money.

We're trying to do policy stuff.

Why I could tell you for sure.

HashiCorp has Sentinel.

It's very much so kind of in that same vein as OPA.

And I don't use it much.

But probably much for other issues.

OK Just scanning it real quick.

The home page, openpolicyagent.org, has a tiny little example for an admission controller.

It's eight lines, right?

Yeah, it's eight lines, and in those eight lines it checks that all pods come from trusted registries.
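
The trusted-registry rule is short in any language. Below is a Python sketch of the same idea, not the Rego from the OPA home page; the registry prefix `registry.example.com/` and the function name are assumptions for illustration.

```python
# Hypothetical allow-list of registry prefixes.
TRUSTED_REGISTRIES = ("registry.example.com/",)


def untrusted_images(pod):
    """List container images in a pod manifest that are not from a trusted registry."""
    spec = pod.get("spec", {})
    containers = spec.get("containers", []) + spec.get("initContainers", [])
    return [
        c["image"]
        for c in containers
        if not c["image"].startswith(TRUSTED_REGISTRIES)
    ]


sample_pod = {
    "spec": {
        "containers": [
            {"name": "app", "image": "registry.example.com/team/app:1.4.2"},
            {"name": "sidecar", "image": "docker.io/library/nginx:1.17"},
        ]
    }
}
```

An admission decision would then deny the pod whenever `untrusted_images(pod)` is non-empty.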

Wow That's good.

That's great.

That's a that's a really that's a great policy there.

I can see it to have.

And similarly to that, even if you need to use public images, you'd be pushing them to private registries, or you'd have a pull-through cache, in Artifactory specifically, or Harbor.

Yeah all sorts.

Yeah And it's just a common engine for it.

I like that coming was pretty good.

And because it's open source, we can all build on it as a community, just like Terraform and Helm registries and stuff like that.

All right, everyone looks like we've reached the end of the hour.

And that about wraps things up for this week.

Remember to register for our weekly office hours if you haven't already; go to cloudposse.com/office-hours.

Again, thanks for sharing everything.

John, thanks for the live demo there of the tfsec and tflint stuff; that was really interesting. A recording of this call will be posted in the office hours channel and syndicated to our podcast at podcast.cloudposse.com.

See you next week.

Same place, same time.